In the spring I learned C++, and in the summer I started writing a ray tracer. I followed resources such as "Ray Tracing in One Weekend" by Peter Shirley and "Physically Based Rendering: From Theory to Implementation" by Pharr and Humphreys. This was an independent project that I worked on while learning more about geometric and wave optics with Jason Murphy.

This project exposed me to computer graphics for the first time. It was also a helpful primer on how real-world lighting can be approximated computationally, how that approximation affects accuracy, and ultimately how long it takes to render an image. I really enjoyed the process of building everything from a vector in 3D space. The Vec3 class described the color, direction, and origin of rays, the radii of spheres, and so on. I hope to add more hittable shapes to this implementation, and I am curious to see how a Matrix class could distill and encapsulate what I have so far. I also enjoyed understanding that each ray shot out from the camera follows a path: when it hits an object, that object's material determines how the ray is scattered, reflected, or refracted. Along the way, each bounce contributes color, and those contributions are accumulated recursively into the pixel that the ray started from.
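To make the recursion concrete, here is a minimal sketch of the idea, not the project's actual code. It assumes a single mirror-like sphere and a sky gradient as the background; the names `Sphere`, `hit`, `ray_color`, and the `albedo` field are illustrative choices of mine.

```cpp
#include <cmath>

// One small type reused for points, directions, and RGB colors.
struct Vec3 { double x, y, z; };
using Point3 = Vec3;
using Color = Vec3;

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, Vec3 b) { return {a.x * b.x, a.y * b.y, a.z * b.z}; }
inline Vec3 operator*(double t, Vec3 v) { return {t * v.x, t * v.y, t * v.z}; }
inline double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
inline Vec3 unit(Vec3 v) { return (1.0 / std::sqrt(dot(v, v))) * v; }

struct Ray {
    Point3 origin;
    Vec3 dir;
    Point3 at(double t) const { return origin + t * dir; }
};

// Hypothetical scene object: a sphere that mirrors light and tints it.
struct Sphere {
    Point3 center;
    double radius;
    Color albedo;  // fraction of each color channel kept per bounce
};

// Returns the nearest hit distance t > t_min, or -1 if the ray misses.
inline double hit(const Sphere& s, const Ray& r, double t_min = 1e-3) {
    Vec3 oc = r.origin - s.center;
    double a = dot(r.dir, r.dir);
    double half_b = dot(oc, r.dir);
    double c = dot(oc, oc) - s.radius * s.radius;
    double disc = half_b * half_b - a * c;
    if (disc < 0.0) return -1.0;
    double root = std::sqrt(disc);
    double t = (-half_b - root) / a;
    if (t > t_min) return t;
    t = (-half_b + root) / a;
    return t > t_min ? t : -1.0;
}

// Color seen along a ray: bounce off the sphere if hit, else sample the sky.
inline Color ray_color(const Ray& r, const Sphere& s, int depth) {
    if (depth <= 0) return {0.0, 0.0, 0.0};  // bounce budget exhausted
    double t = hit(s, r);
    if (t > 0.0) {
        Point3 p = r.at(t);
        Vec3 n = unit(p - s.center);               // outward surface normal
        Vec3 d = unit(r.dir);
        Vec3 reflected = d - 2.0 * dot(d, n) * n;  // mirror reflection
        return s.albedo * ray_color({p, reflected}, s, depth - 1);
    }
    double k = 0.5 * (unit(r.dir).y + 1.0);  // 0 looking down, 1 looking up
    return (1.0 - k) * Color{1.0, 1.0, 1.0} + k * Color{0.5, 0.7, 1.0};
}
```

Calling `ray_color(ray, sphere, 5)` for one camera ray gives that ray's contribution to its pixel. The depth limit is what keeps the recursion from running forever between reflective surfaces.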

Antialiasing and gamma correction were two especially important concepts that I enjoyed learning about. The antialiasing process of "softening" rough pixel edges makes perfect sense, and while my implementation covers the basics, I can see how more sophisticated sampling techniques would be useful. Realizing that gamma correction takes what the computer's "camera" senses and translates those signals into something more readable to the human eye was also a fascinating discovery. It made me consider how different the levels of information the computer sees are from what the human eye actually perceives, and wonder what other color spaces can be reached with nonlinear transformations.
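As a rough illustration of both ideas, the sketch below averages several randomly jittered samples per pixel and then gamma-encodes the result. It is a sketch under assumptions: `shade` is a hypothetical callback standing in for the renderer, and the gamma of 2 (a plain square root) is a common simplification rather than the full sRGB curve.

```cpp
#include <algorithm>
#include <cmath>
#include <functional>
#include <random>

struct Rgb { double r, g, b; };

// Average `samples` randomly jittered evaluations inside pixel (px, py).
// `shade(u, v)` is a hypothetical callback that returns the linear color
// at continuous image coordinates (u, v).
inline Rgb sample_pixel(int px, int py, int samples,
                        const std::function<Rgb(double, double)>& shade,
                        std::mt19937& rng) {
    std::uniform_real_distribution<double> jitter(0.0, 1.0);
    Rgb sum{0.0, 0.0, 0.0};
    for (int i = 0; i < samples; ++i) {
        double u = px + jitter(rng);  // random position within the pixel
        double v = py + jitter(rng);
        Rgb c = shade(u, v);
        sum.r += c.r;
        sum.g += c.g;
        sum.b += c.b;
    }
    return {sum.r / samples, sum.g / samples, sum.b / samples};
}

// Gamma-2 encode one linear channel and quantize it to 0..255.
inline int to_byte(double linear) {
    double clamped = std::min(1.0, std::max(0.0, linear));
    double encoded = std::sqrt(clamped);  // gamma 2: value^(1/2)
    return static_cast<int>(255.999 * encoded);
}
```

A pixel that straddles a hard edge ends up with an intermediate value instead of snapping to one side, which is the "softening" effect. The square root then brightens mid-tones so that a linear value of 0.25 is stored as roughly half of full scale.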

Things I plan to keep working on include adding a parallel projection option, adding ray transformations for views wider than 90 degrees or for a stereographic lens effect, and looking into how to implement diffraction-based lighting effects.

Parallel projection will most likely be the easiest of these tasks. It would entail adjusting the camera's rays so that they enter the scene parallel to one another, orthogonal to the image plane, rather than projecting out at an angle. This could have repercussions for the camera's existing focal length and depth of field, and I am excited to see how it goes.
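The difference between the two camera models can be sketched in a few lines. This is an illustrative sketch rather than the project's camera: it assumes a camera at the origin looking down the negative z axis, and the function and parameter names (`perspective_ray`, `orthographic_ray`, `half_w`, `half_h`, `focal`) are mine.

```cpp
struct V3 { double x, y, z; };
struct CamRay { V3 origin; V3 dir; };

// (u, v) are image coordinates in [0, 1]; half_w and half_h are half the
// width and height of the image plane in world units.

// Perspective: every ray starts at the eye and fans out through the plane.
inline CamRay perspective_ray(double u, double v,
                              double half_w, double half_h, double focal) {
    return {{0.0, 0.0, 0.0},
            {(2.0 * u - 1.0) * half_w, (2.0 * v - 1.0) * half_h, -focal}};
}

// Orthographic: ray origins are spread across the plane and every ray
// shares the same direction, so distant objects do not shrink.
inline CamRay orthographic_ray(double u, double v,
                               double half_w, double half_h) {
    return {{(2.0 * u - 1.0) * half_w, (2.0 * v - 1.0) * half_h, 0.0},
            {0.0, 0.0, -1.0}};
}
```

Note that the orthographic version has no focal length parameter at all, which hints at why the existing focal length and depth of field settings would need rethinking.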

Part of why I sought out this and last summer's research project was that I was interested in emulating a "fisheye lens" effect within a virtual space. Completing this could look like taking the existing linear rays and constructing a hemisphere or dome-shaped projection when the camera's view angle is less than 180°. As far as I can tell, once you pass 180°, your image becomes a construction of the light that is hitting you from every angle, and you are mapping anywhere up to a full sphere of directions onto a flat surface. While both levels of distortion would require rethinking the coordinate basis that the project is built upon, I believe that view angles within 180° are a well-defined problem that I can work with, whereas angles greater than 180° may be more fundamentally problematic.
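One possible starting point is the equidistant fisheye model, in which a pixel's distance from the image center is proportional to the angle between its ray and the optical axis. The sketch below is an assumption on my part about how the mapping could be written, not something the project already does; `fisheye_dir` and its parameters are hypothetical names, and the camera is assumed to look down the negative z axis.

```cpp
#include <cmath>

struct Dir { double x, y, z; };

// Map a point (x, y) in the unit image circle to a ray direction using the
// equidistant fisheye model. `fov_deg` is the full field of view in degrees.
// Returns false for points outside the image circle.
inline bool fisheye_dir(double x, double y, double fov_deg, Dir& out) {
    const double pi = std::acos(-1.0);
    double r = std::sqrt(x * x + y * y);  // 0 at the center, 1 at the rim
    if (r > 1.0) return false;
    double theta = r * (fov_deg * pi / 180.0) / 2.0;  // angle off the axis
    double phi = std::atan2(y, x);                    // angle around the axis
    out = {std::sin(theta) * std::cos(phi),
           std::sin(theta) * std::sin(phi),
           -std::cos(theta)};
    return true;
}
```

With `fov_deg` set to 180, the rim of the image circle corresponds to rays perpendicular to the viewing direction, which matches the hemisphere picture. In this particular model, larger values make the rim rays point behind the camera; other lens models may behave differently past 180°.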

"Diffraction Shaders" by Jos Stam was an impactful paper that computed diffraction shaders in the modeling software MAYA.While not simulating full electromagnetic waves, it demonstrated that surface texture alone can create diffraction-like effects. For my ray tracer to achieve similar results, I'd need to extend it beyond smooth surfaces to account for microscopic roughness and wavelength-dependent scattering—treating surfaces not as perfect mirrors but as complex optical elements.

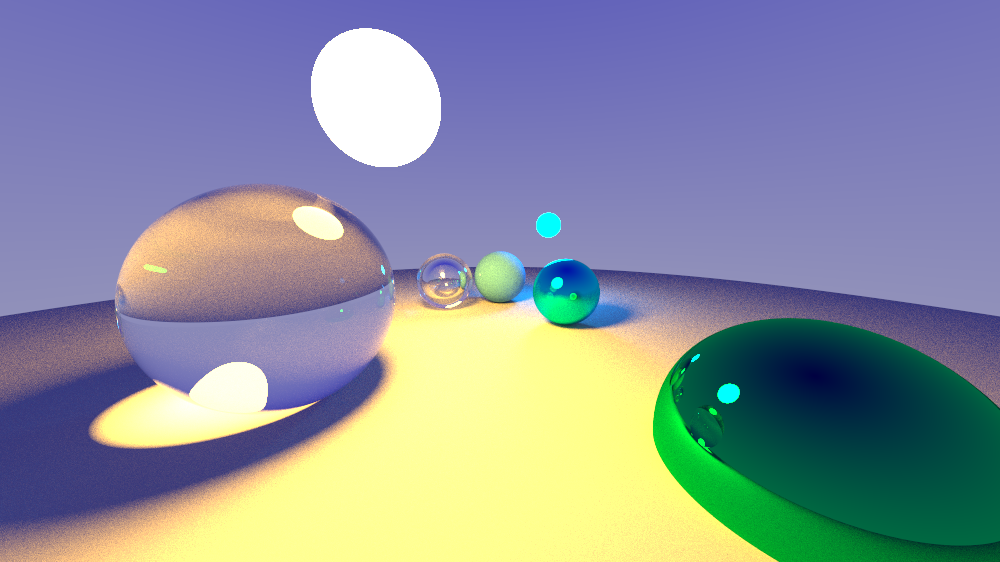

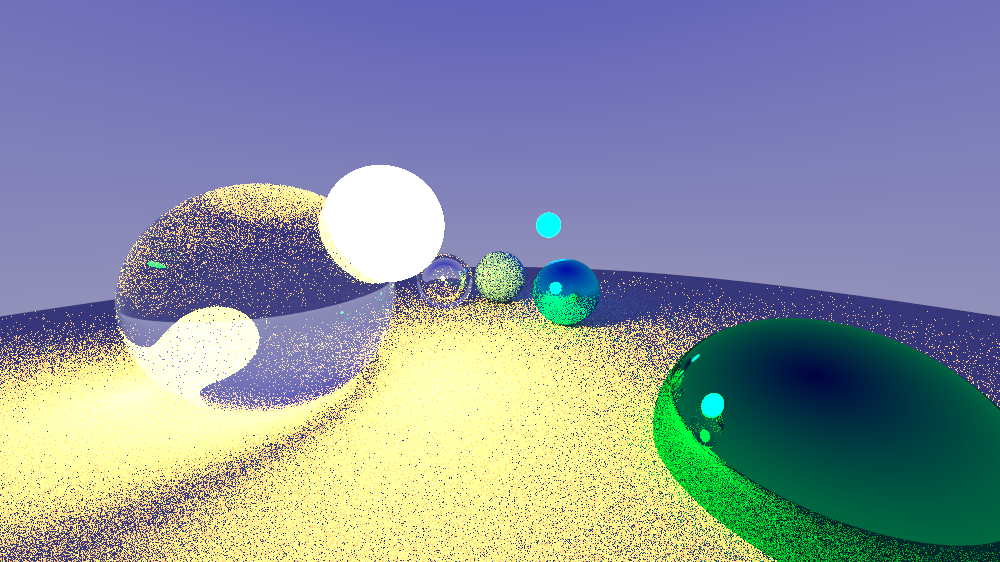

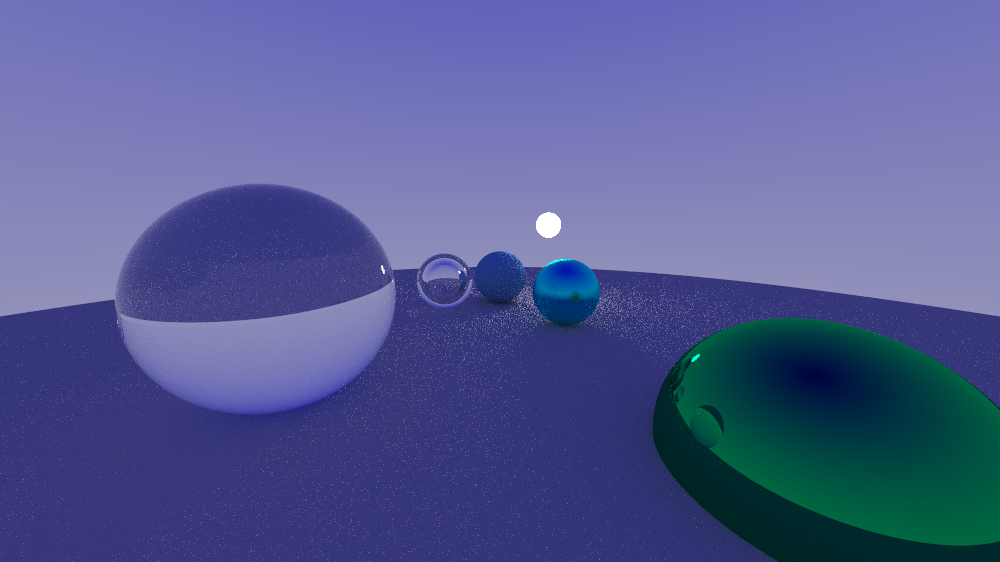

Process Photos

These are some process images from when I was experimenting with a diffuse light object's intensity and location, and with antialiasing. I will also link my GitHub repo here.